Deep learning has long operated in a curious limbo: practitioners achieve remarkable results through experimentation and intuition, yet fundamental questions about why these systems work remain largely unanswered. The field is now maturing, as researchers build rigorous mathematical theories to explain neural network behavior and move from black-box empiricism toward a reproducible, predictive science.

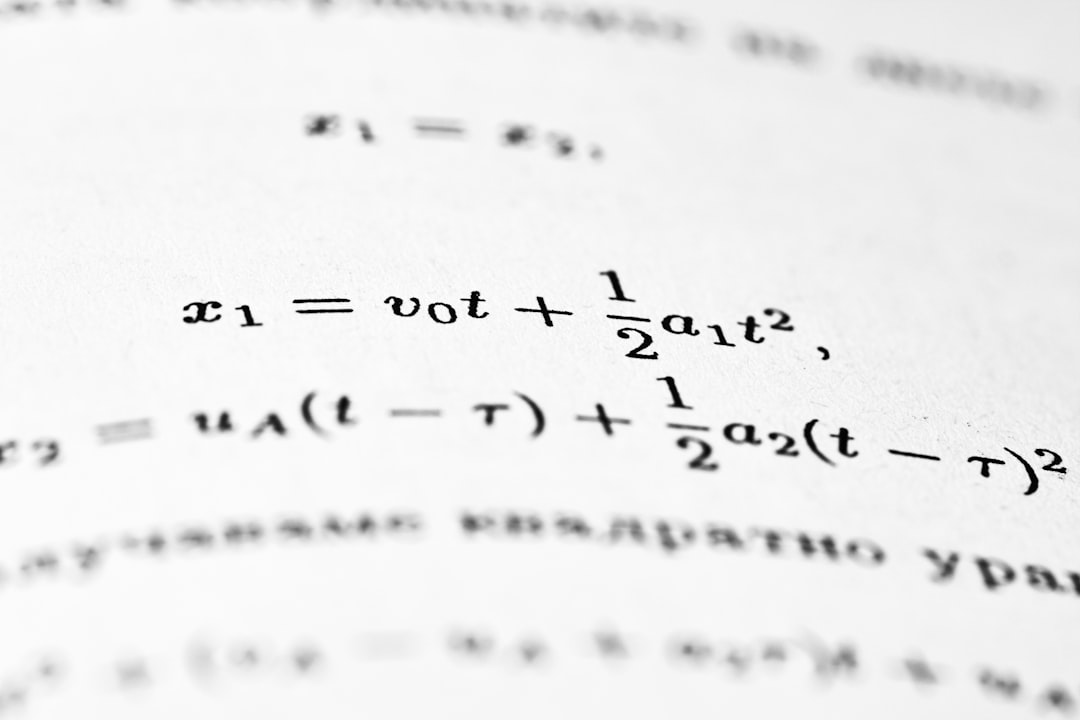

Recent advances in neural tangent kernel (NTK) theory, loss landscape analysis, and implicit regularization are providing concrete mechanisms for understanding training dynamics. These theoretical frameworks don't just describe what happens; they let developers derive generalization bounds, reason about architecture choices, and anticipate failure modes before deployment. When you can mathematically prove that a particular training regime will converge to solutions with specific properties, you're no longer guessing. You're engineering.
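To make the NTK less abstract, here is a minimal NumPy sketch of the empirical (finite-width) kernel for a two-layer ReLU network. The architecture, the 1/sqrt(m) output scaling, the sizes, and the function name `empirical_ntk` are illustrative assumptions, not the API of any particular library.

```python
import numpy as np

def empirical_ntk(X, W, a):
    """Empirical NTK of f(x) = a . relu(W x) / sqrt(m).

    K[i, k] is the inner product of the parameter gradients of f
    at inputs X[i] and X[k], taken over both W and a.
    """
    m = W.shape[0]
    pre = X @ W.T                      # pre-activations, shape (n, m)
    act = np.maximum(pre, 0.0)         # relu(W x)
    gate = (pre > 0).astype(X.dtype)   # derivative of relu

    # Contribution of gradients with respect to the output weights a.
    K_a = act @ act.T / m

    # Contribution of gradients with respect to the hidden weights W:
    # df/dW_j = (a_j * gate_j / sqrt(m)) * x
    G = gate * a                       # shape (n, m)
    K_W = (G @ G.T) * (X @ X.T) / m

    return K_a + K_W

# Hypothetical sizes chosen only for illustration.
rng = np.random.default_rng(0)
n, d, m = 5, 3, 4096
X = rng.normal(size=(n, d))
W = rng.normal(size=(m, d))
a = rng.normal(size=m)

K = empirical_ntk(X, W, a)
eigenvalues = np.linalg.eigvalsh(K)    # K is a Gram matrix, so these are >= 0
print(K.shape, eigenvalues.min())
```

Because `K` is a Gram matrix of gradients, it is symmetric and positive semidefinite by construction. Convergence analyses in the NTK regime typically depend on how far its smallest eigenvalue is from zero, which is why the sketch prints it.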

For developers building production systems, this theoretical shift has practical implications. Understanding the implicit biases of gradient descent, the role of overparameterization, and how data distribution affects learning curves can inform hyperparameter selection, architecture design, and robustness analysis. Some libraries and frameworks are beginning to expose these theoretical insights, allowing engineers to make informed decisions grounded in mathematics rather than folklore.
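One well-established instance of implicit bias can be checked in a few lines. For overparameterized linear least squares (more features than samples), gradient descent started from zero converges to the minimum-norm solution among all those that fit the data exactly. The sketch below assumes synthetic Gaussian data and a step size derived from the spectral norm of `X`; the function name and sizes are hypothetical.

```python
import numpy as np

def gradient_descent_least_squares(X, y, lr, steps):
    """Plain gradient descent on (1 / 2n) * ||X w - y||^2, starting at w = 0."""
    n = len(y)
    w = np.zeros(X.shape[1])
    for _ in range(steps):
        w -= lr * X.T @ (X @ w - y) / n
    return w

# Overparameterized setting: d > n, so infinitely many w interpolate the data.
rng = np.random.default_rng(0)
n, d = 20, 100
X = rng.normal(size=(n, d))
y = rng.normal(size=n)

# Step size 1 / L, where L is the largest eigenvalue of X^T X / n.
lr = n / np.linalg.norm(X, 2) ** 2

w_gd = gradient_descent_least_squares(X, y, lr=lr, steps=2000)
w_min_norm = np.linalg.pinv(X) @ y     # minimum-norm interpolating solution

print(np.linalg.norm(w_gd - w_min_norm))   # distance between the two solutions
print(np.linalg.norm(X @ w_gd - y))        # training residual
```

The reason this works is that every gradient step lies in the row space of `X`, so iterates that start at zero never pick up a component outside it. The optimizer, not an explicit penalty term, selects which of the many interpolating solutions you get.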

The emergence of a true scientific theory of deep learning won't eliminate empirical validation; it will enhance it. With predictive theory, you can design experiments that test specific hypotheses about your models' behavior. This convergence of theory and practice should ultimately make AI systems more reliable, interpretable, and trustworthy in critical applications.